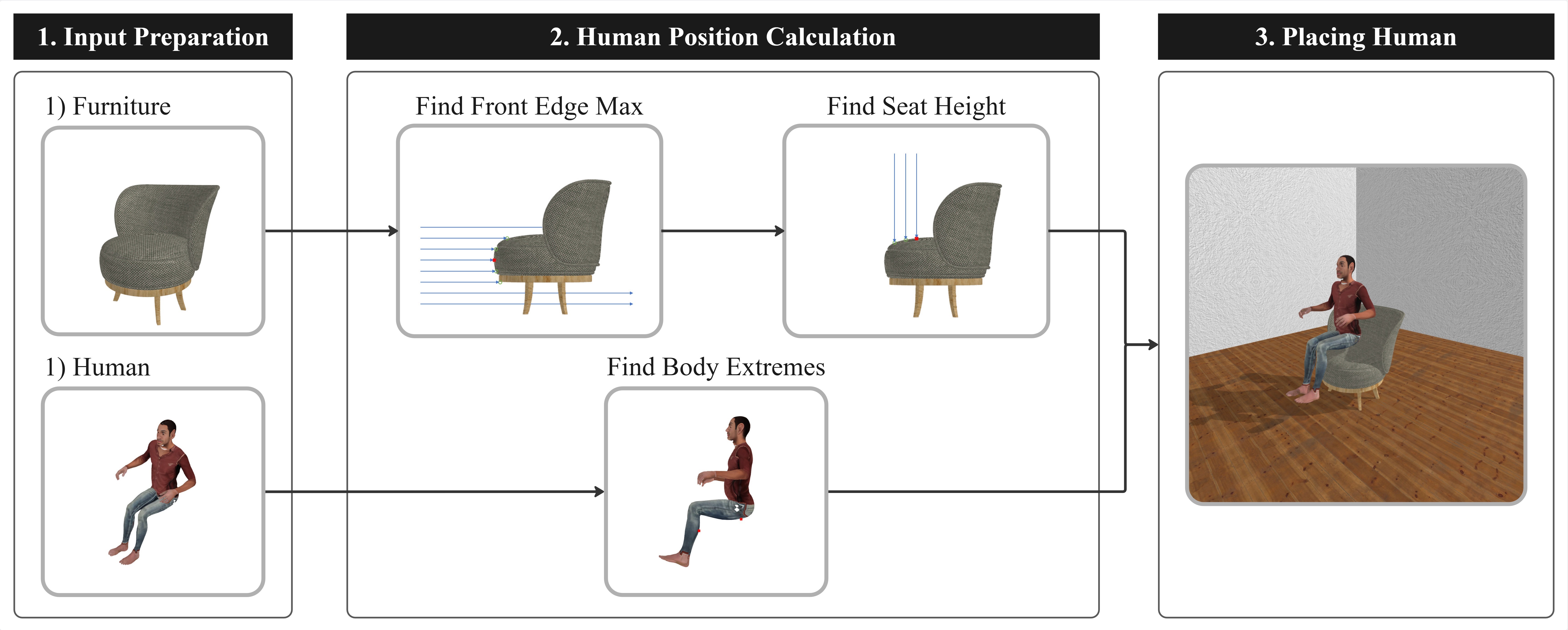

human_description: "A medium-height, broad-shouldered person with a muscular build in a sitting posture."

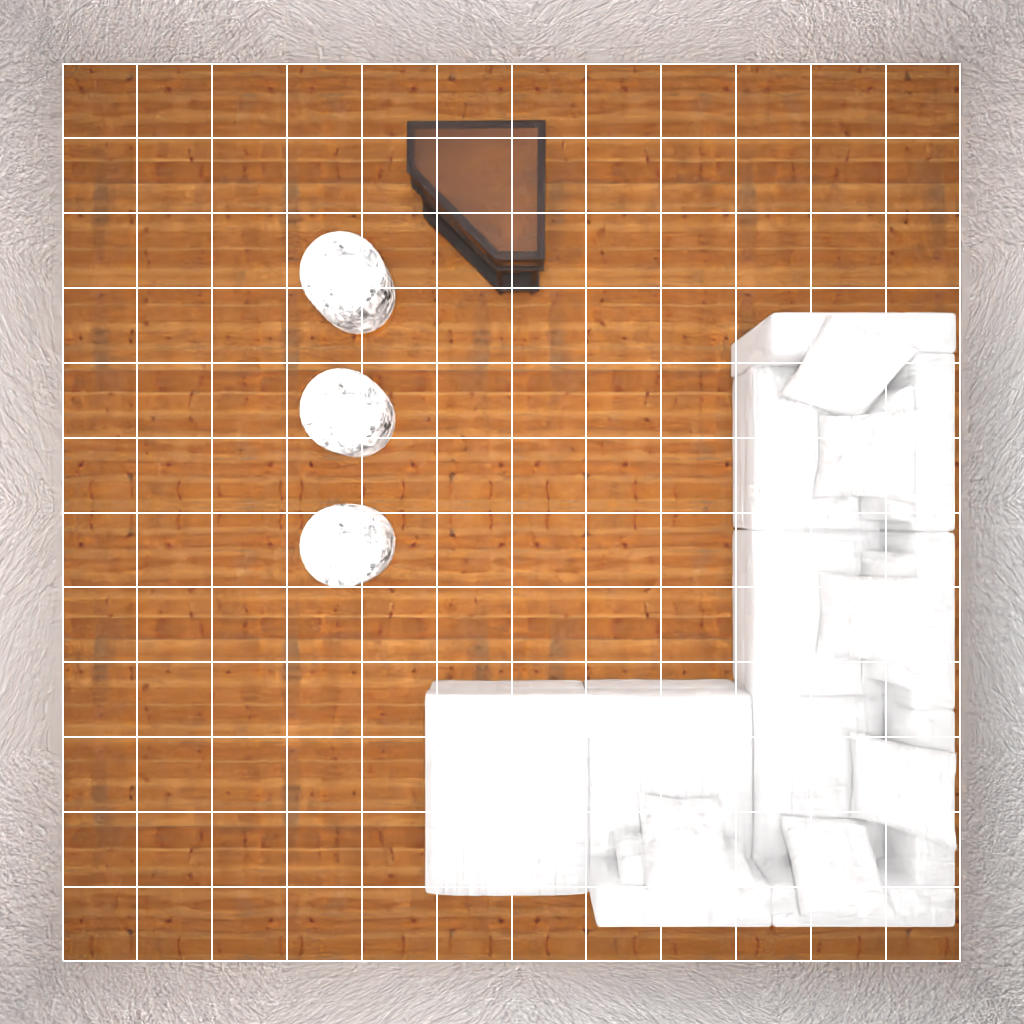

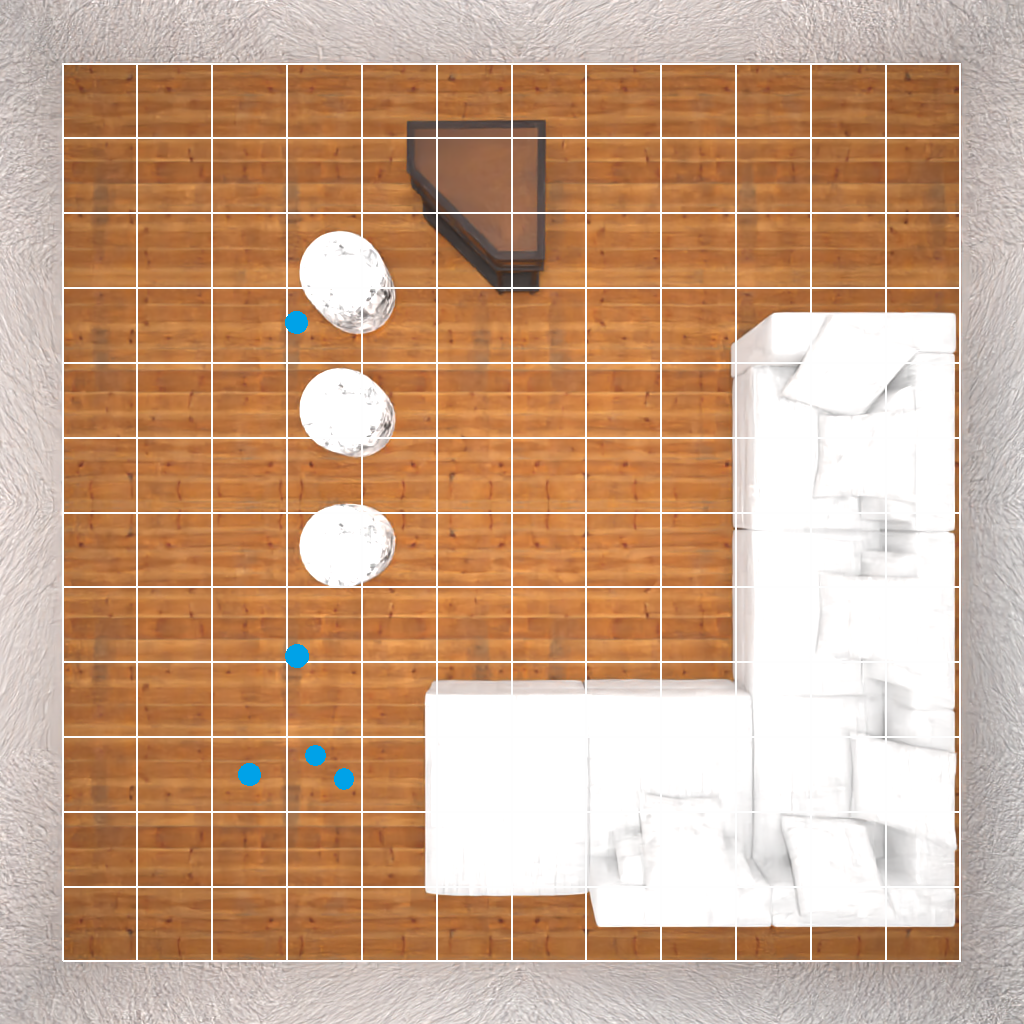

room_json: {
  "floor_objects": [
    {
      "assetId": "8813acda-0658-4cda-8220-750ec96eba99",
      "id": null,
      "kinematic": true,
      "position": {"x": 4.6991549384593965, "y": 0.5201099067926407, "z": 2.2},
      "rotation": {"x": 0, "y": 90, "z": 0},
      "material": null,
      "roomId": null,
      "vertices": [
        [604.5, 102.07508444786072],
        [604.5, 337.9249155521393],
        [335.3309876918793, 337.9249155521393],
        [335.3309876918793, 102.07508444786072]
      ],
      "object_name": "bed-0"
    },
    {
      "assetId": "a8e38746-2e50-4546-96a0-dc7f75c2074f",
      "id": null,
      "kinematic": true,
      "position": {"x": 4.2, "y": 0.8254517614841461, "z": 0.28584778785705567},
      "rotation": {"x": 0, "y": 0, "z": 0},
      "material": null,
      "roomId": null,
      "vertices": [
        [493.4748739004135, -4.5],
        [493.4748739004135, 61.66955757141113],
        [346.5251260995865, 61.66955757141113],
        [346.5251260995865, -4.5]
      ],
      "object_name": "dresser-0"
    },
    {
      "assetId": "863316e2-050e-4787-822e-c4a6202a9f32",
      "id": null,
      "kinematic": true,
      "position": {"x": 2.6, "y": 0.39989787340164185, "z": 0.5792869079113007},
      "rotation": {"x": 0, "y": 0, "z": 0},
      "material": null,
      "roomId": null,
      "vertices": [
        [322.5156092643738, -4.5],
        [322.5156092643738, 120.35738158226013],
        [197.48439073562622, 120.35738158226013],
        [197.48439073562622, -4.5]
      ],
      "object_name": "armchair-1"
    },
    {
      "assetId": "94149f77-9373-4637-9972-0ed77f2fa4bd",
      "id": null,
      "kinematic": true,
      "position": {"x": 2.6, "y": 0.3885276548098773, "z": 3.0},
      "rotation": {"x": 0, "y": 180, "z": 0},
      "material": null,
      "roomId": null,
      "vertices": [
        [314.7334152460098, 354.77037757635117],
        [314.7334152460098, 245.22962242364883],
        [205.26658475399017, 245.22962242364883],
        [205.26658475399017, 354.77037757635117],
        [314.7334152460098, 354.77037757635117]
      ],
      "object_name": "table-0"
    },
    {
      "assetId": "18c10bfa-dfe4-455b-85af-7ff839a0a9c6",
      "id": null,
      "kinematic": true,
      "position": {"x": 2.6, "y": 0.18432170641608536, "z": 3.9999999999999996},
      "rotation": {"x": 0, "y": 180, "z": 0},
      "material": null,
      "roomId": null,
      "vertices": [
        [285.7096956670284, 421.72083139419556],
        [285.7096956670284, 378.27916860580444],
        [234.29030433297157, 378.27916860580444],
        [234.29030433297157, 421.72083139419556],
        [285.7096956670284, 421.72083139419556]
      ],
      "object_name": "armchair-0"
    },
    {
      "assetId": "05a04d29-8805-4b8c-b69b-6e353b07b725",
      "id": null,
      "kinematic": true,
      "position": {"x": 3.8, "y": 0.5453844904986909, "z": 4.0},
      "rotation": {"x": 0, "y": 180, "z": 0},
      "material": null,
      "roomId": null,
      "vertices": [
        [438.92294228076935, 453.73225688934326],
        [438.92294228076935, 346.26774311065674],
        [321.07705771923065, 346.26774311065674],
        [321.07705771923065, 453.73225688934326],
        [438.92294228076935, 453.73225688934326]
      ],
      "object_name": "chair-0"
    },
    {
      "assetId": "9a6705ba-471f-4398-ae81-c2984eb95a1b",
      "id": null,
      "kinematic": true,
      "position": {"x": 1.6, "y": 0.900574088213034, "z": 4.2},
      "rotation": {"x": 0, "y": 90, "z": 0},
      "material": null,
      "roomId": null,
      "vertices": [
        [189.59192872047424, 379.5541340112686],
        [189.59192872047424, 460.4458659887314],
        [130.40807127952576, 460.4458659887314],
        [130.40807127952576, 379.5541340112686],
        [189.59192872047424, 379.5541340112686]
      ],
      "object_name": "floor lamps-1"
    },
    {
      "assetId": "9a6705ba-471f-4398-ae81-c2984eb95a1b",
      "id": null,
      "kinematic": true,
      "position": {"x": 4.8, "y": 0.900574088213034, "z": 4.2},
      "rotation": {"x": 0, "y": 90, "z": 0},
      "material": null,
      "roomId": null,
      "vertices": [
        [509.59192872047424, 379.5541340112686],
        [509.59192872047424, 460.4458659887314],
        [450.40807127952576, 460.4458659887314],
        [450.40807127952576, 379.5541340112686],
        [509.59192872047424, 379.5541340112686]
      ],
      "object_name": "floor lamps-0"
    }
  ],
  "wall_objects": []
}
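One reading of this record, inferred from the numbers rather than stated anywhere in it, is that `position` is given in meters while each `vertices` list is the object's axis-aligned floor footprint as (x, z) pairs in centimeters. Under that assumption, the center of every footprint should equal `position` scaled by 100. The sketch below checks this for two of the objects (bed-0 and table-0). The helper names `footprint_bounds` and `check_centroids` are hypothetical and not part of any known tool. Some footprints repeat their first vertex as a closing point and others do not; taking min/max over the coordinates handles both cases.

```python
import json

# Hypothetical excerpt of room_json, trimmed to the fields this check uses.
# Assumption: "position" is in meters; "vertices" are (x, z) footprint corners in centimeters.
ROOM_JSON = """
{
  "floor_objects": [
    {"object_name": "bed-0",
     "position": {"x": 4.6991549384593965, "y": 0.5201099067926407, "z": 2.2},
     "vertices": [[604.5, 102.07508444786072], [604.5, 337.9249155521393],
                  [335.3309876918793, 337.9249155521393], [335.3309876918793, 102.07508444786072]]},
    {"object_name": "table-0",
     "position": {"x": 2.6, "y": 0.3885276548098773, "z": 3.0},
     "vertices": [[314.7334152460098, 354.77037757635117], [314.7334152460098, 245.22962242364883],
                  [205.26658475399017, 245.22962242364883], [205.26658475399017, 354.77037757635117],
                  [314.7334152460098, 354.77037757635117]]}
  ],
  "wall_objects": []
}
"""


def footprint_bounds(vertices):
    """Return (min_x, min_z, max_x, max_z); a repeated closing vertex does not change the result."""
    xs = [v[0] for v in vertices]
    zs = [v[1] for v in vertices]
    return min(xs), min(zs), max(xs), max(zs)


def check_centroids(room, tol_cm=0.01):
    """Map object_name -> True if the footprint's box center matches position * 100 (m -> cm)."""
    results = {}
    for obj in room["floor_objects"]:
        min_x, min_z, max_x, max_z = footprint_bounds(obj["vertices"])
        cx, cz = (min_x + max_x) / 2, (min_z + max_z) / 2
        px, pz = obj["position"]["x"] * 100, obj["position"]["z"] * 100
        results[obj["object_name"]] = abs(cx - px) <= tol_cm and abs(cz - pz) <= tol_cm
    return results


room = json.loads(ROOM_JSON)
print(check_centroids(room))  # expected: {'bed-0': True, 'table-0': True}
```

The same check can be run over all eight floor objects by pasting the full `floor_objects` list into `ROOM_JSON`; a `False` would suggest either a different unit convention or a footprint that was not recomputed after the object moved.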